The team started working on adaptive audio after the pandemic pushed the world to video conferencing and, eventually, hybrid work. At the time, supply chain shortages made new meeting room hardware hard to get. “Plus, many organizations didn’t have enough video conferencing rooms to begin with, or they didn’t have the resources for dedicated meeting room equipment,” Huib says.

Teams needed to be able to create ad hoc meeting spaces without the inconvenience of crowding around a single laptop. But enabling everyone to join from their own devices while silencing the “screams” is much harder than it sounds.

“Imagine a movie theater audio setup. You have multiple speakers around you, and it’s a nice audio experience because they’re all cabled to the same sound source, so they play out in an intended synchronicity,” Meet Software Engineer Manager Henrik Lundin says. “Now, if you have several devices in the room playing the same audio without synchronization, it would sound horrible. You’re getting multiple copies of the same audio — like you’re standing in a large cathedral. And likewise, when you speak in a room with multiple microphones on different devices, they pick up sound at the same time, but they’re not on the same clock.”

Then there’s the echo problem. You’ve probably noticed that you’ll sometimes get an echo of your own voice back when using video conferencing tools. “The reason that you don’t get that all the time is because the devices that run meetings have an echo canceller inside,” Henrik says. “It’s a signal processing algorithm that tries to figure out which part of the audio from the microphone signal is actually just coming from the speakers in the same device and which part of it is your voice. This gets 10x harder when you have multiple laptops in the same room playing the audio and feeding into each other’s microphones.”
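To make the echo canceller idea concrete, here is a minimal sketch of one textbook approach, a normalized least-mean-squares (NLMS) adaptive filter. It is not Meet's implementation; the function name, filter length, step size, and the simulated echo path are all assumptions chosen for illustration. The filter learns how the speaker signal shows up in the microphone and subtracts that estimate, leaving whatever is not echo.

```python
import random

def nlms_echo_cancel(far_end, mic, taps=16, mu=0.5, eps=1e-8):
    """Subtract an adaptive estimate of the loudspeaker echo from the mic signal."""
    weights = [0.0] * taps   # running estimate of the echo path
    history = [0.0] * taps   # most recent far-end (speaker) samples, newest first
    cleaned = []
    for x, d in zip(far_end, mic):
        history = [x] + history[:-1]
        echo_estimate = sum(w * h for w, h in zip(weights, history))
        error = d - echo_estimate  # near-end voice plus any residual echo
        norm = sum(h * h for h in history) + eps
        # NLMS update: nudge the weights in the direction that shrinks the error.
        weights = [w + mu * error * h / norm for w, h in zip(weights, history)]
        cleaned.append(error)
    return cleaned

def power(signal):
    return sum(s * s for s in signal) / len(signal)

random.seed(0)
far_end = [random.uniform(-1.0, 1.0) for _ in range(4000)]

# Hypothetical echo path: the speaker output reaches the mic delayed and attenuated.
echo_path = [0.0, 0.0, 0.6, 0.0, 0.3, -0.1]
mic = []
for n in range(len(far_end)):
    sample = 0.0
    for k, gain in enumerate(echo_path):
        if n - k >= 0:
            sample += gain * far_end[n - k]
    mic.append(sample)  # nobody is talking locally, so the mic hears only echo

cleaned = nlms_echo_cancel(far_end, mic)
echo_power_before = power(mic[-1000:])
echo_power_after = power(cleaned[-1000:])
```

In this single-device simulation the filter only has to model one speaker-to-microphone path. With several laptops in a room, every microphone hears every speaker, each over a different path and on a different clock, which is one way to see why the problem gets so much harder.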

To solve this audio puzzle, the team spent a lot of time getting in the same room and figuring out how to get their laptops to know they were next to each other. At first, they tested having people join specific preset groups within the meeting. “This was obviously error-prone, but it helped us test out the experience of synchronizing all the laptops’ microphones and speakers,” Henrik says.

Then they tried using ultrasound. By emitting high-frequency sounds undetectable to the human ear, the laptops can identify the presence of other laptops in close proximity and begin acting together as a group. This eliminated the need for users to manually configure their devices or select the room they were in. “But it was really tricky because the ultrasound needed to work reliably on any device, and be precise — if audio leaks from the room next door, it shouldn’t think you’re in the same room,” Henrik says. The team adopted a new type of ultrasound to increase accuracy, and tuned the frequency and volume to optimize reach without being audible.
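As a rough illustration of how a device might listen for an ultrasonic beacon, the sketch below uses the Goertzel algorithm, a standard way to measure signal power at a single frequency. The article does not say which signal or detector Meet uses, so the sample rate, beacon frequency, threshold, and function names here are all hypothetical.

```python
import math

SAMPLE_RATE = 48_000   # Hz; assumed capture rate
BEACON_HZ = 20_000     # hypothetical beacon frequency near the edge of human hearing
THRESHOLD = 1e-4       # hypothetical power threshold for "same room"

def goertzel_power(samples, target_hz, sample_rate):
    """Return the signal power in the frequency bin closest to target_hz."""
    n = len(samples)
    k = round(n * target_hz / sample_rate)
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev, s_prev2 = 0.0, 0.0
    for sample in samples:
        s = sample + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    magnitude_sq = s_prev * s_prev + s_prev2 * s_prev2 - coeff * s_prev * s_prev2
    return magnitude_sq / (n * n)

def beacon_detected(samples, threshold=THRESHOLD):
    return goertzel_power(samples, BEACON_HZ, SAMPLE_RATE) >= threshold

def tone(freq_hz, amplitude, n=480):
    """Generate n samples (10 ms at 48 kHz) of a sine wave."""
    return [amplitude * math.sin(2.0 * math.pi * freq_hz * i / SAMPLE_RATE)
            for i in range(n)]

def mix(a, b):
    return [x + y for x, y in zip(a, b)]

speech_like = tone(300, 0.5)                      # stand-in for audible room sound
same_room = mix(speech_like, tone(BEACON_HZ, 0.05))
next_door = mix(speech_like, tone(BEACON_HZ, 0.005))  # beacon weakened by a wall

results = {
    "same_room": beacon_detected(same_room),
    "speech_only": beacon_detected(speech_like),
    "next_door": beacon_detected(next_door),
}
```

The threshold is what separates a beacon in the same room from one leaking through a wall. A single fixed number like this is far too simple for real devices with different speakers and microphones, which hints at why tuning the frequency and volume took effort.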

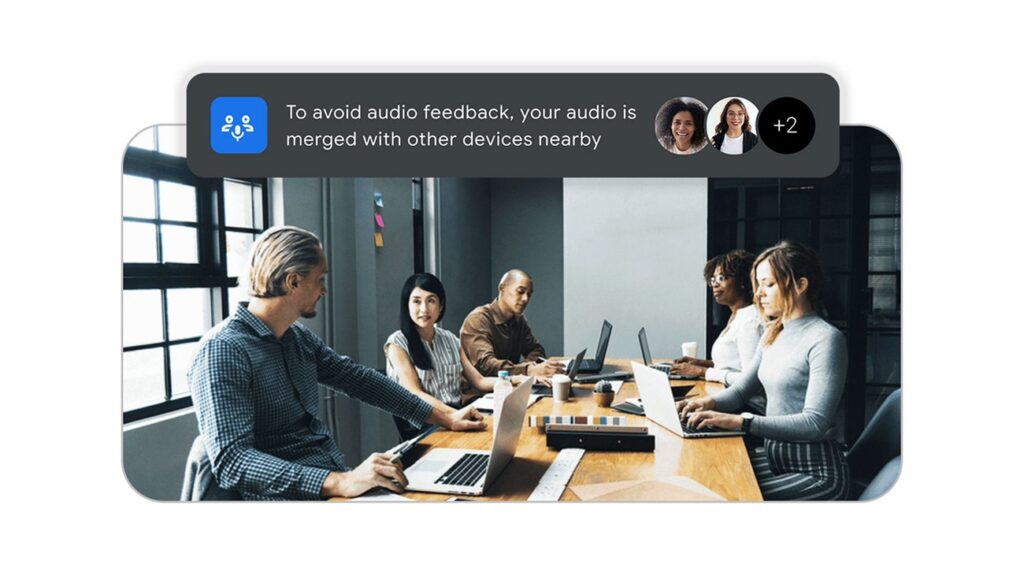

Once Meet detects that multiple laptops are present, adaptive audio activates automatically, synchronizing all the laptops’ microphones and speakers without turning any speakers off. It switches between microphones depending on who’s talking to prevent feedback and echo. Additionally, Meet uses backend processing and a cloud denoiser to enhance audio quality and remove background noise before transmitting audio to other participants.
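One simple way to picture switching between microphones is to compare loudness frame by frame and keep only the clearly loudest device active. The sketch below does that with a hysteresis margin so the choice does not flip on small differences. It is an assumption-laden illustration, not a description of how Meet decides: the function names, the `switch_ratio` parameter, and the device labels are all made up.

```python
import math

def rms(frame):
    """Root-mean-square level of one audio frame."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))

def select_microphones(frames_per_device, switch_ratio=1.5):
    """Pick one active device per frame.

    frames_per_device maps a device id to a list of frames (lists of floats).
    The active device changes only when another mic is switch_ratio times louder.
    """
    device_ids = list(frames_per_device)
    frame_count = min(len(frames) for frames in frames_per_device.values())
    active = device_ids[0]
    choices = []
    for i in range(frame_count):
        levels = {d: rms(frames_per_device[d][i]) for d in device_ids}
        loudest = max(levels, key=levels.get)
        if loudest != active and levels[loudest] > switch_ratio * levels[active]:
            active = loudest
        choices.append(active)
    return choices

def constant_frame(level, size=160):
    """Toy frame whose RMS equals the given level."""
    return [level] * size

# The person near laptop_a talks first, then the person near laptop_b takes over.
frames = {
    "laptop_a": [constant_frame(v) for v in (0.5, 0.5, 0.1, 0.1)],
    "laptop_b": [constant_frame(v) for v in (0.1, 0.12, 0.13, 0.6)],
}
choices = select_microphones(frames)
```

In the third frame laptop_b is slightly louder but not by the required margin, so laptop_a stays active until the second speaker clearly takes over in the fourth frame.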

Across Google, meetings already use adaptive audio every day, many without participants even realizing it. “It’s one of those technologies that removes the cognitive load from the user. They don’t have to wonder if they’re in the right setup before they join a meeting,” Meet Interaction Design Lead Ahmed Aly says. “Regardless of how complex and marvelous the engineering behind it is, from the end user perspective, whenever they open their laptop and join a meeting, it just works.”